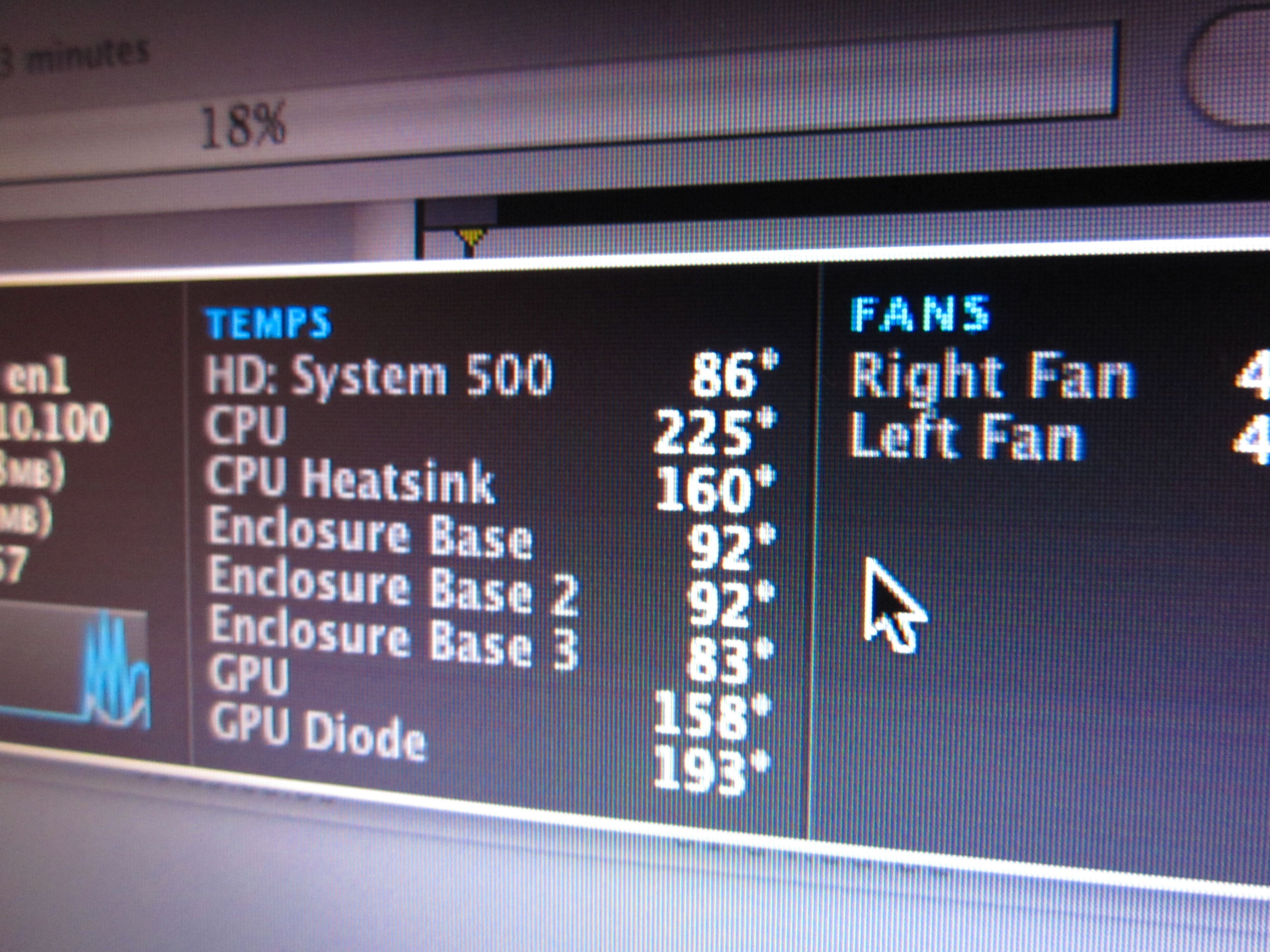

Storyboard

Arturo, Julio, Tamar and I put together a storyboard for our Communications Lab narrative video. Click above for a semi-decipherable full-res version.

Danger Mouse

If it’s not a prank, this mouse has to be the most chilling example thus far in support of dictatorship over direct democracy in matters of design.

The open-source community has delivered millions of lines of code to the world, and I love it dearly for this. But the movement seems completely devoid of aesthetic sensibility or restraint. Even the Ubuntu project, which tries so hard to get usability right (and does, to some extent), still serves up a watery approximation of Windows and an excess of configuration options.

Maybe things will improve as more non-coders learn to code. (Or maybe this will just do to code what the open-source developers did to design.)

(Via Daring Fireball.)

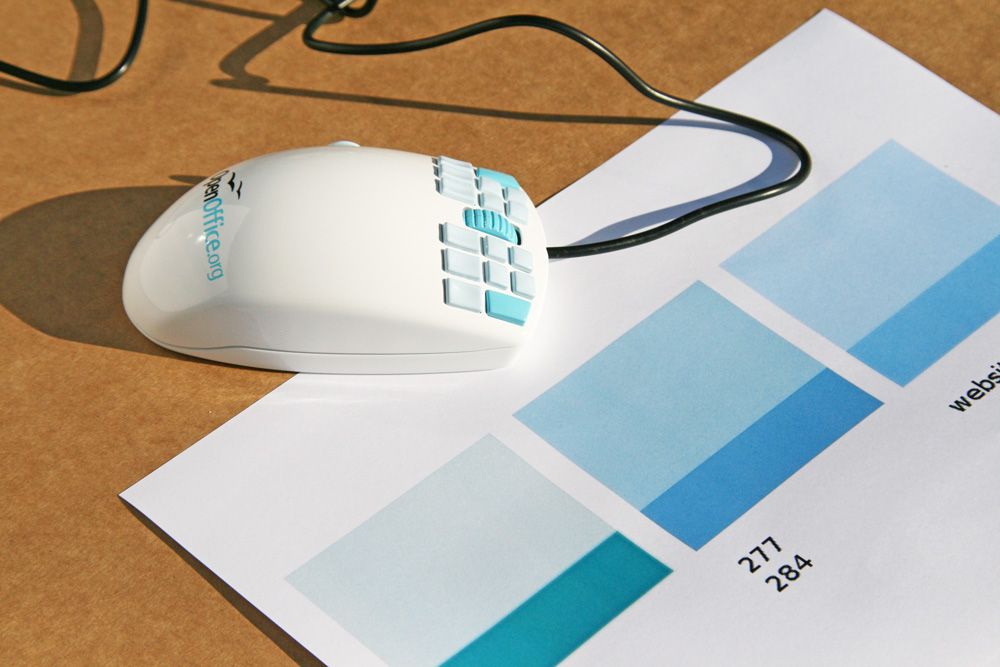

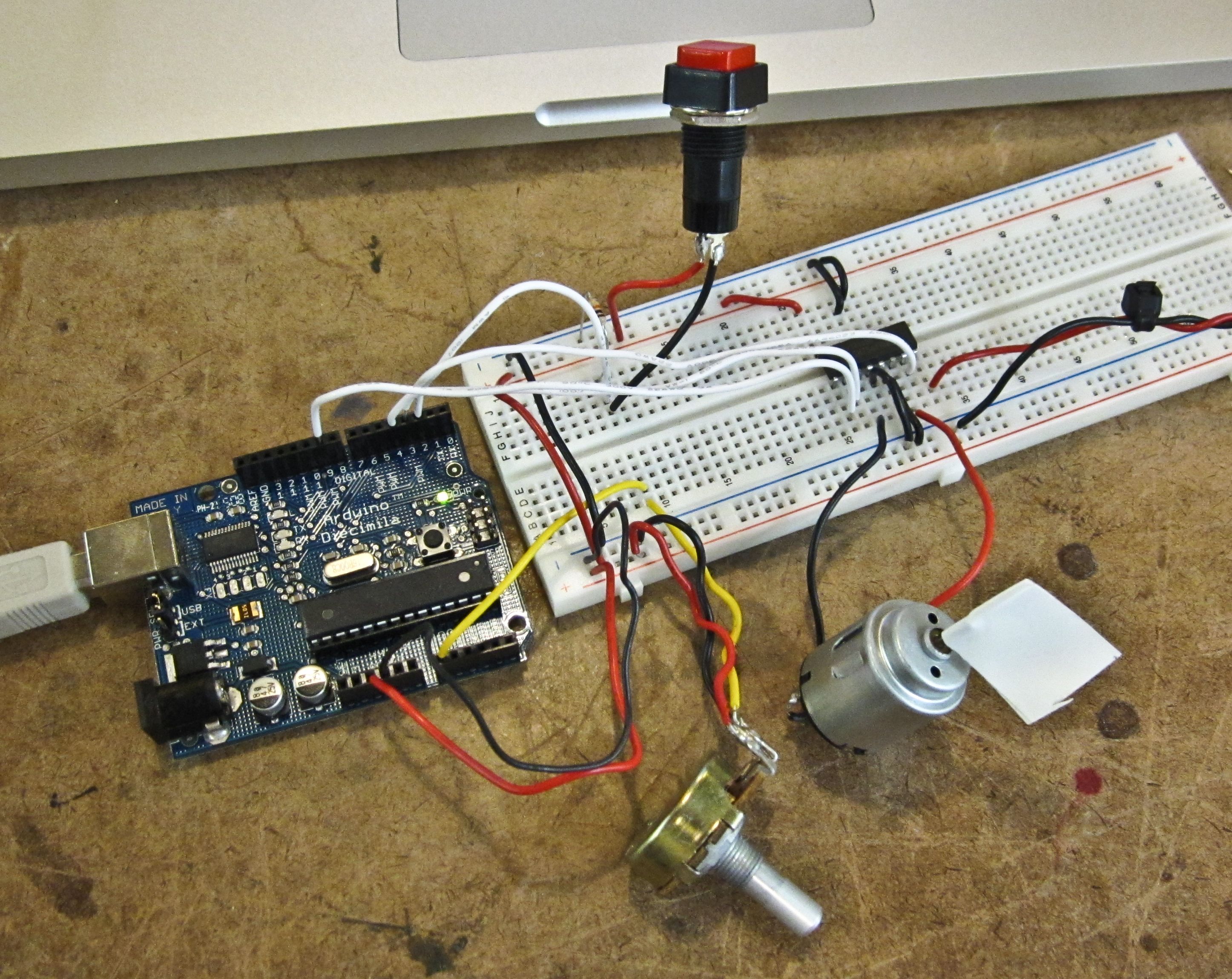

Lab: DC Motor Control

This week’s lab covered DC motor control with an H-bridge, an IC that lets you control a motor’s direction. Applying power to C1 sends current through the motor in one direction, while applying power to C2 sends it through the other way. Setting both C1 and C2 high has a braking effect on the motor.
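The direction logic above boils down to a small truth table. Here is a rough sketch of it in plain C++ rather than the lab's actual Arduino code. The names `BridgeInputs`, `MotorState` and `motorState` are made up for illustration, "forward" and "reverse" are arbitrary labels, the enable pin is assumed to be held high, and the both-low case (which the lab notes above don't cover) is assumed to leave the motor undriven.

```cpp
#include <cassert>

// Logic levels on the H-bridge's two control inputs (C1 and C2 above).
struct BridgeInputs {
    bool c1;
    bool c2;
};

// Hypothetical labels; which direction counts as "forward" depends on wiring.
enum class MotorState { Forward, Reverse, Brake, Stopped };

// Map the two control inputs to the resulting motor behaviour.
// Assumes the enable pin is high. Both inputs low is an assumption:
// it is treated here as "not driven in either direction".
MotorState motorState(BridgeInputs in) {
    if (in.c1 && in.c2) return MotorState::Brake;   // both high: braking
    if (in.c1) return MotorState::Forward;          // only C1 high
    if (in.c2) return MotorState::Reverse;          // only C2 high
    return MotorState::Stopped;                     // both low (assumed)
}

// Small usage example: walk the four input combinations.
void checkTruthTable() {
    assert(motorState({true, false}) == MotorState::Forward);
    assert(motorState({false, true}) == MotorState::Reverse);
    assert(motorState({true, true}) == MotorState::Brake);
    assert(motorState({false, false}) == MotorState::Stopped);
}
```

On real hardware, the two booleans would presumably be written to whichever Arduino pins are wired to C1 and C2.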

I added a potentiometer to the lab’s circuit to allow speed control. The pot controlled a PWM signal going to the H-bridge’s enable pin. Power to the motor came courtesy of a 9V wall wart.

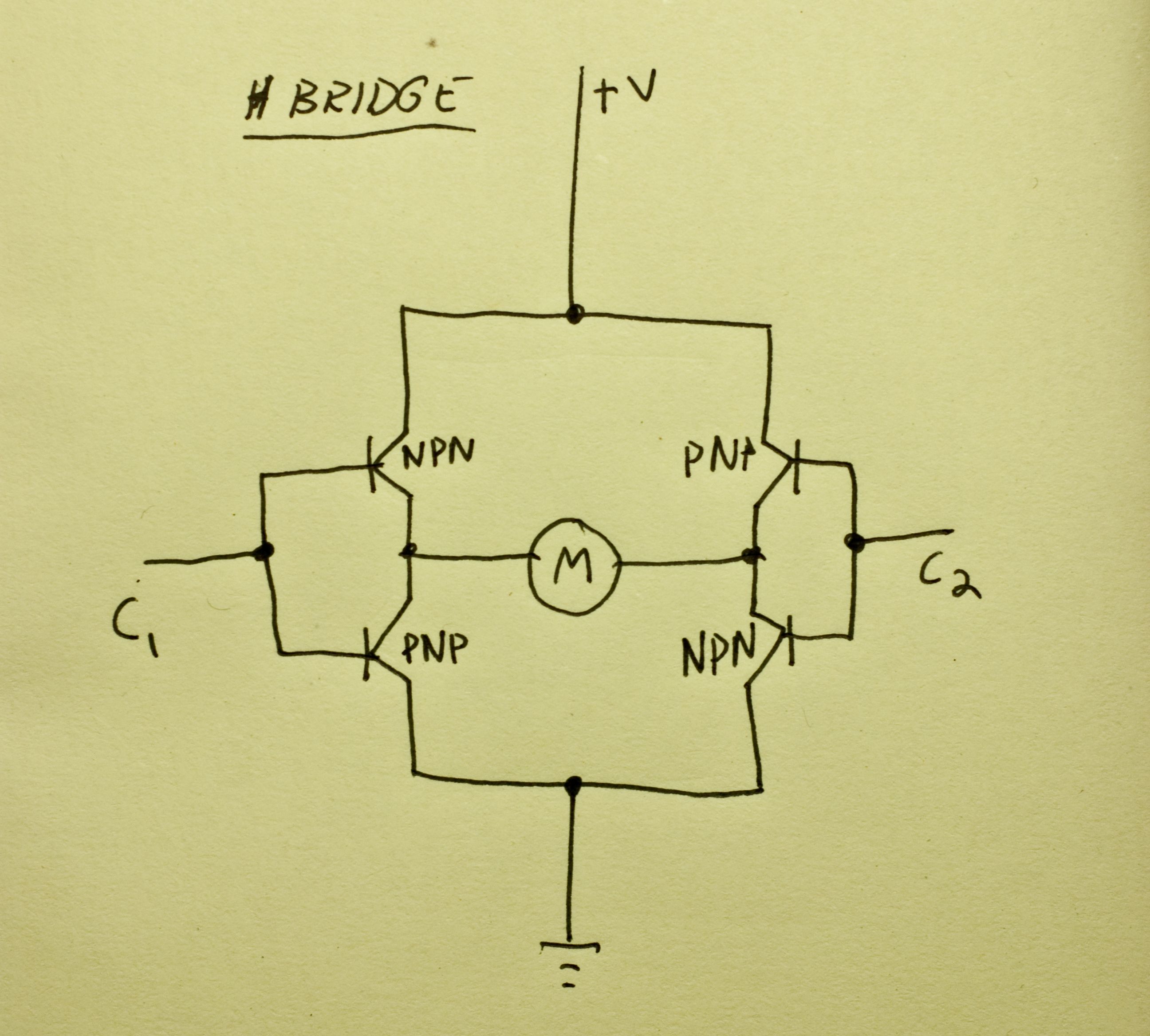

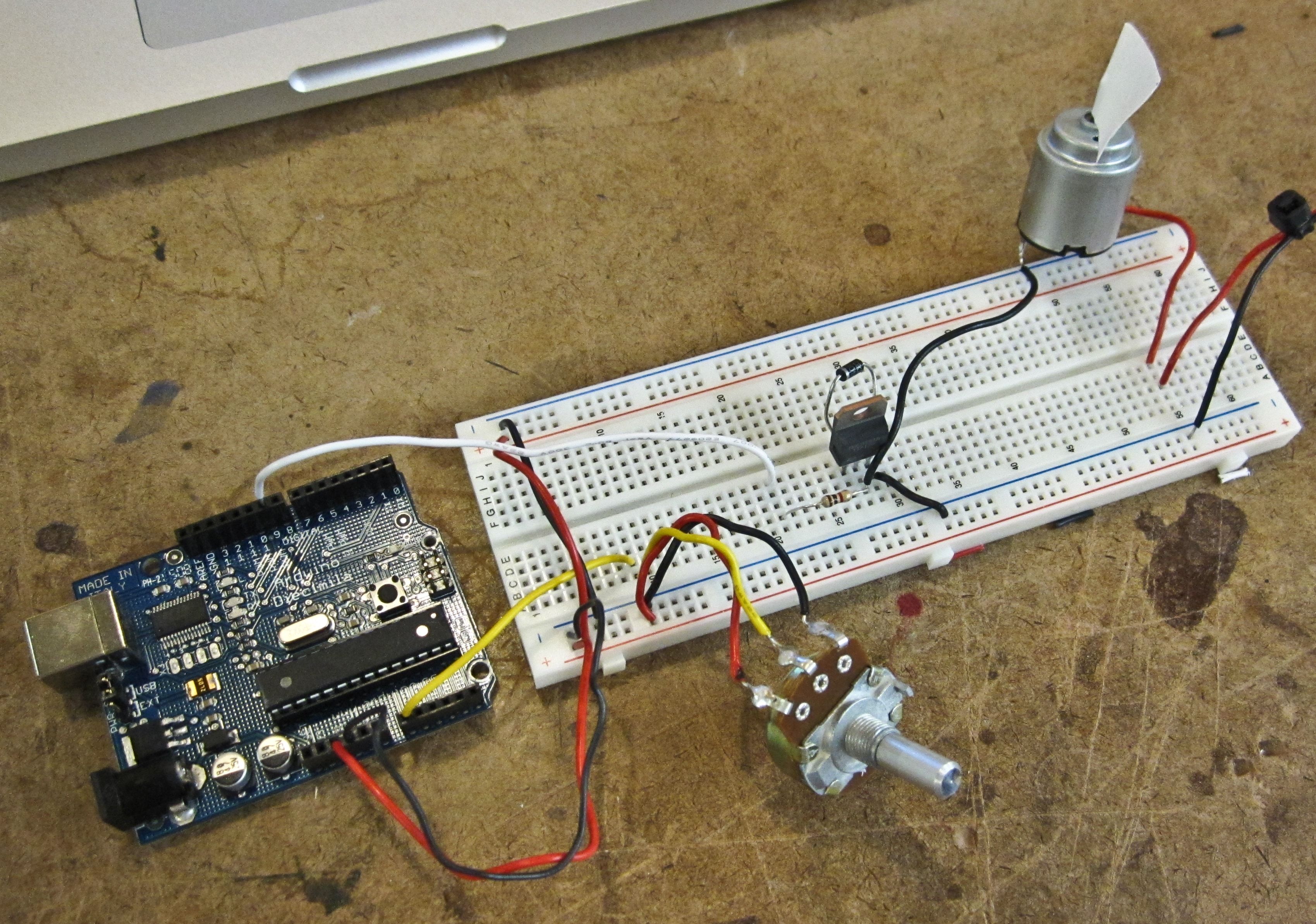

Lab: Transistors

The transistor lab explains how to control a higher-voltage circuit with a lower-voltage one. In this case, I used the Arduino and a transistor to start and stop a DC motor running on a separate 9V power source.

The circuit looks like this. The diode across the transistor keeps the voltage the motor generates (…it's now a generator) from driving current back through the circuit while it spins down after power is cut.

A little code sets the motor starting and stopping. I initially tried to run the motor on the Arduino’s 5V power supply, but as soon as it turned the motor on, the Arduino would brown out and reset. Hooking up an external 9V supply fixed this. (However, judging from the frantic sounds the motor produced, 9V might have been more than it wanted.)
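For a sense of what start/stop code like that can look like, here is a minimal sketch of the timing logic in plain C++. It is not the sketch I ran. The function name `motorShouldRun` and the on/off durations are hypothetical, chosen only to illustrate the idea.

```cpp
#include <cassert>

// Decide whether the motor should be on at time nowMs, given a repeating
// cycle of onMs milliseconds running followed by offMs milliseconds resting.
// All names and durations here are illustrative assumptions.
bool motorShouldRun(unsigned long nowMs, unsigned long onMs, unsigned long offMs) {
    unsigned long period = onMs + offMs;
    if (period == 0) return false;      // degenerate cycle: keep the motor off
    return (nowMs % period) < onMs;     // first part of each cycle is "on"
}

// Small usage example: one second on, one second off.
void checkCycle() {
    assert(motorShouldRun(0, 1000, 1000));
    assert(motorShouldRun(999, 1000, 1000));
    assert(!motorShouldRun(1000, 1000, 1000));
    assert(!motorShouldRun(1999, 1000, 1000));
    assert(motorShouldRun(2000, 1000, 1000));
}
```

On an Arduino, `nowMs` would come from `millis()` and the result would go to `digitalWrite()` on whichever pin drives the transistor's base.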

Using a potentiometer to control a PWM signal to the transistor allowed for speed control:
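A minimal sketch of that mapping, in plain C++ rather than the exact code from the lab. It assumes a 10-bit analog reading (0 to 1023, what `analogRead()` returns on a standard Arduino) and an 8-bit PWM duty value (0 to 255, what `analogWrite()` accepts). The function name `potToDuty` is made up for illustration.

```cpp
#include <cassert>

// Convert a 10-bit potentiometer reading (0..1023) into an 8-bit PWM duty
// value (0..255). Out-of-range readings are clamped first.
int potToDuty(int reading) {
    if (reading < 0) reading = 0;
    if (reading > 1023) reading = 1023;
    return reading / 4;   // 1024 input steps collapse onto 256 output steps
}

// Small usage example: the ends and the middle of the pot's travel.
void checkMapping() {
    assert(potToDuty(0) == 0);       // pot fully down: motor off
    assert(potToDuty(512) == 128);   // roughly half speed
    assert(potToDuty(1023) == 255);  // pot fully up: full duty
}
```

On the board, the loop would read the pot with `analogRead()`, pass the value through a function like this, and hand the result to `analogWrite()` on a PWM-capable pin connected to the transistor's base.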