While developing Brain Radio with Arturo and Sofy, we needed a way to tune between an arbitrary, and potentially unstable, number of channels. For context, Brain Radio is a head-mounted, EEG-based broadcast system: anyone with a headset can tune in to anyone else with a headset and listen to sounds synthesized from that person’s brain waves.

How, exactly, would the tuning process take place? We knew a few things:

- We wanted to use a dial to draw on its associations with radio, tuning, broadcast, and analog.

- We would need some kind of tactile feedback, since the dial would be mounted on the headset, outside of the wearer’s field of view.

- The number of available stations / channels would vary — if three people were in range, the dial would need to be able to tune to three positions. If more channels came online or dropped out, the interface would have to adapt.

What we really wanted was a potentiometer with detents, to make it easy to click-click-click from station to station. Detents also summon a tactile delight rivaled only by toggle switches and large mechanical levers. They inflate the sense of intention associated with an action: They make you feel like you know what you’re doing.

The catch, of course, is that potentiometers with detents have a finite number of them, and they’re set at the factory. If we bought five-detent pots and ended up with six radio channels to tune between, we were SOL. What we needed was a variable detent potentiometer.

Google turned up some shady, product-less patent filings. And the PComp list confirmed that no such device existed — and then suggested something very savvy: use something else to generate the tactile feedback.

How about a vibration motor…

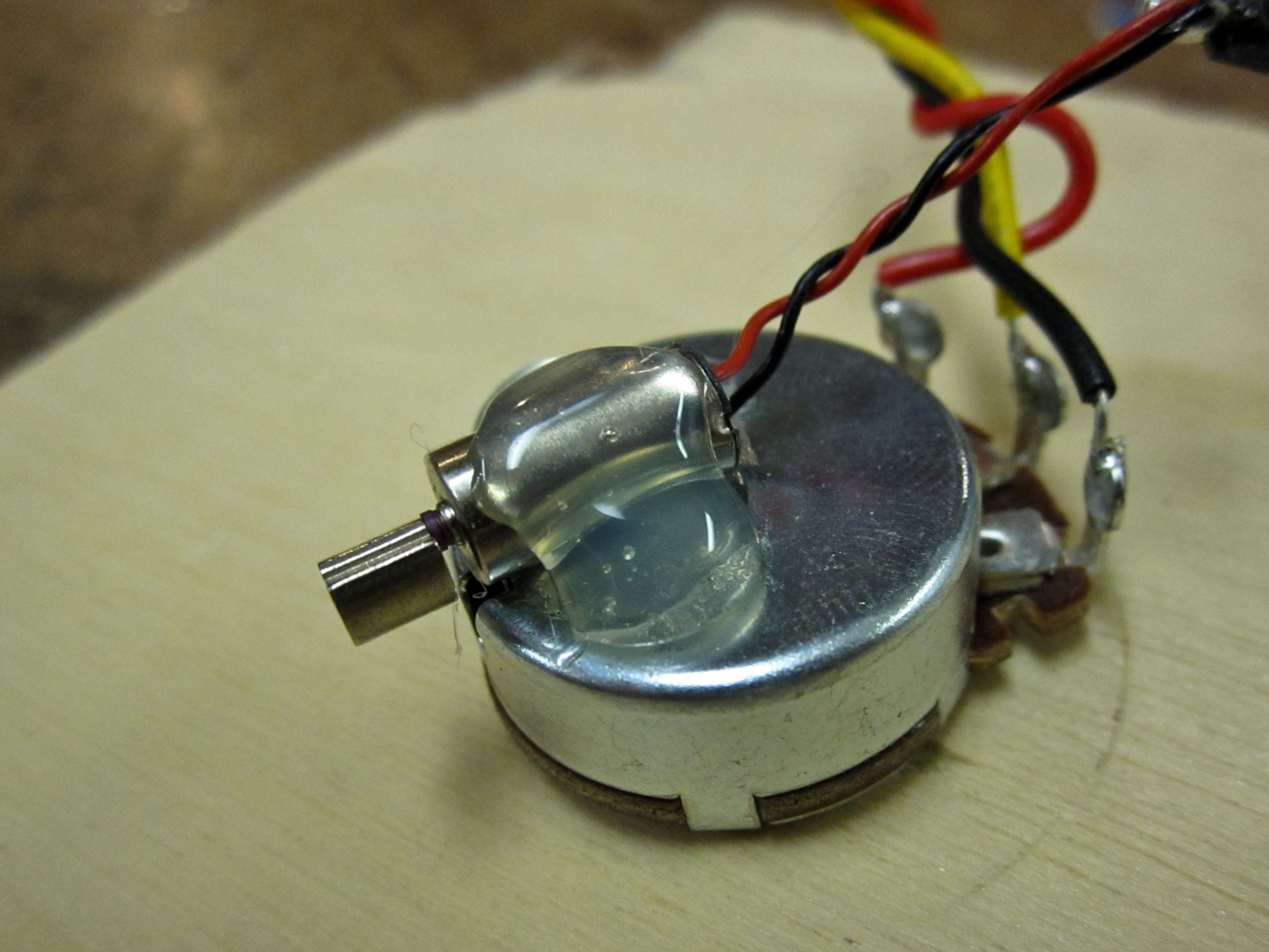

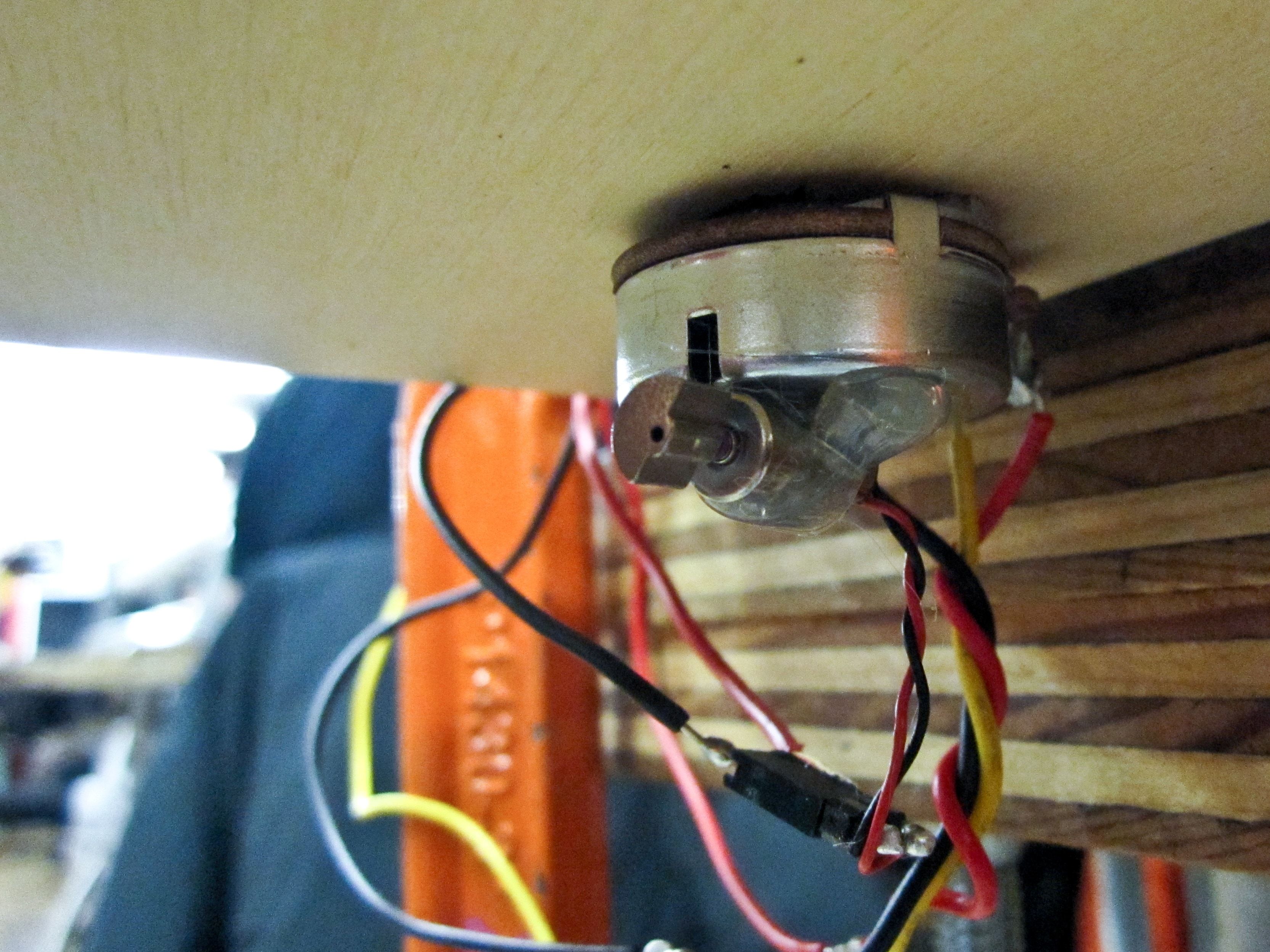

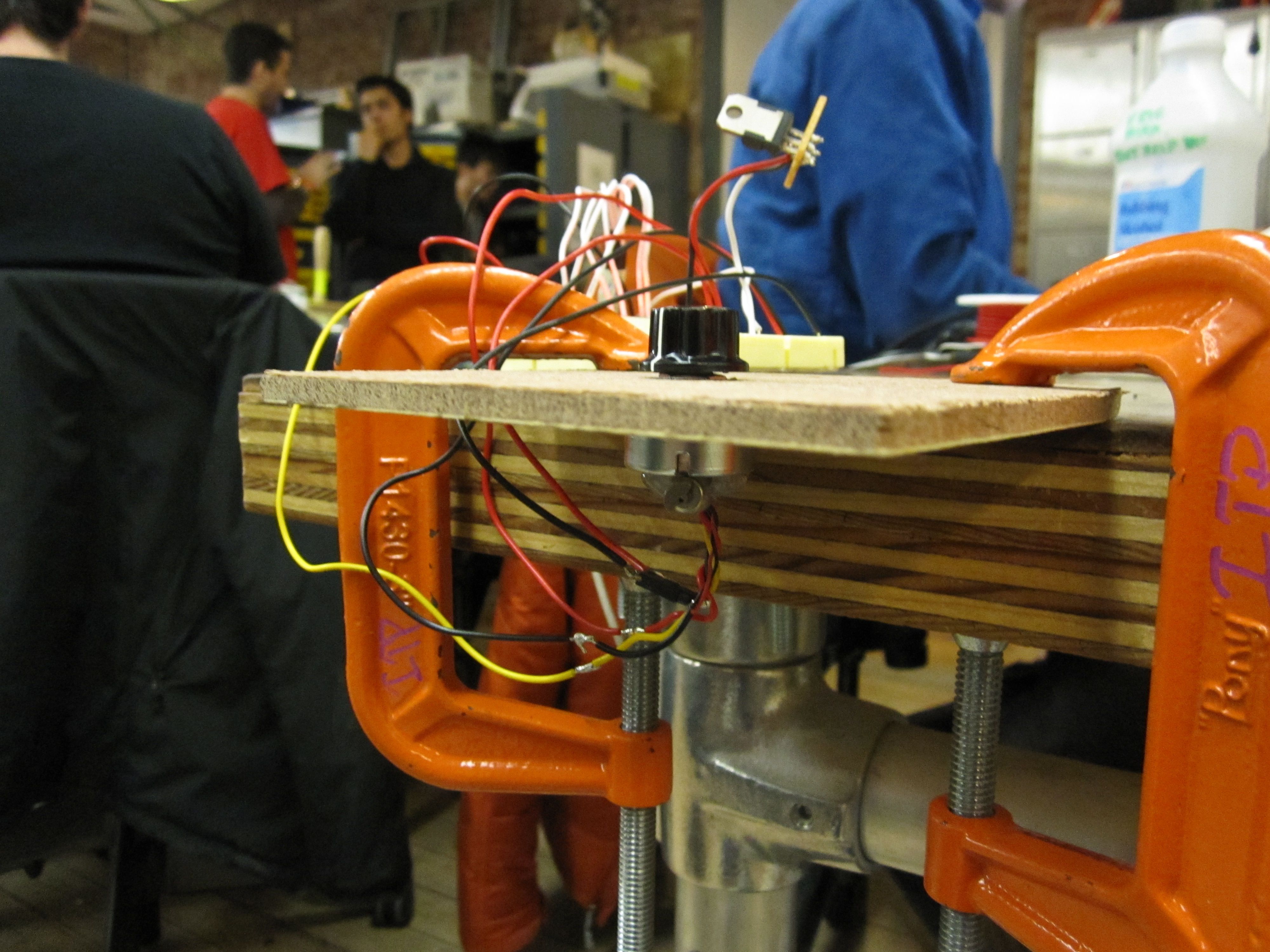

So I did exactly that. A vibration motor, some hot glue, a potentiometer, and some code all collided to create the buzz pot:

The video doesn’t really convey the physical feedback that comes through the pot when a channel threshold is crossed, but in practice it works pretty well. The number of channels is easy to change in software: the pot’s range of analog readings is simply divided into slices, and each slice represents a single channel.

For example, a three-channel setting puts channel 1 at analog readings 0 to 340, channel 2 at 341 to 681, and channel 3 at 682 to 1023. When the pot moves from one channel’s range into the next, the microcontroller switches on the transistor that powers the vibration motor for a fraction of a second (200 ms in the code). The resulting buzz travels through the pot, so users can feel when they’ve changed channels even if they can’t see what they’re doing.
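The two pieces of logic described above, slicing the reading into channels and firing a short pulse only when the slice changes, can be modeled off-board in plain C++. This is a minimal model under stated assumptions, not the project’s actual code: `channelForReading` and `BuzzPulse` are hypothetical names, readings are assumed to come from a 10-bit ADC (0 to 1023, as on the Arduino), and the clock is passed in explicitly so the logic can run without hardware. The slicing reuses the `potValue / (1023 / channels)` arithmetic and top-end clamp from the Arduino code, and the 200 ms default mirrors its `vibDuration`.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>

// Hypothetical helper: maps a 10-bit reading (0-1023) to a zero-based channel
// using the same integer arithmetic as the Buzz Pot code: reading / (1023 / channels).
int channelForReading(int reading, int channels) {
  assert(channels > 0 && channels <= 1023);  // keeps the slice width non-zero
  const int slice = 1023 / channels;         // e.g. 341 for three channels
  // The very top readings land one slice past the end; clamp them back,
  // as constrain() does in the original.
  return std::min(std::max(reading / slice, 0), channels - 1);
}

// Hypothetical edge-triggered pulse: starts when the channel changes and
// stays on for durationMs, like the vibStart / vibDuration pair in loop().
struct BuzzPulse {
  uint32_t durationMs = 200;  // mirrors vibDuration
  int lastChannel = -1;       // -1 = no reading yet, so power-up does not buzz
  uint32_t startMs = 0;
  bool on = false;

  // Returns true while the motor should run; nowMs plays the role of millis().
  bool update(int channel, uint32_t nowMs) {
    if (lastChannel != -1 && channel != lastChannel) {
      startMs = nowMs;  // threshold crossed: (re)start the pulse
      on = true;
    }
    lastChannel = channel;
    // Unsigned subtraction gives the right elapsed time across counter wraparound.
    if (on && nowMs - startMs > durationMs) {
      on = false;
    }
    return on;
  }
};
```

With three channels this yields readings 0 to 340, 341 to 681, and 682 to 1023. The integer division makes the top slice a little wider than the rest (with ten channels, 106 readings versus 102); scaling with `reading * channels / 1024` would spread the remainder more evenly, at the cost of departing from the original arithmetic. One deliberate difference: in the original, `vibStart` starts at 0, so the motor also runs briefly right after reset, while this model waits for a first reading before treating anything as a change.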

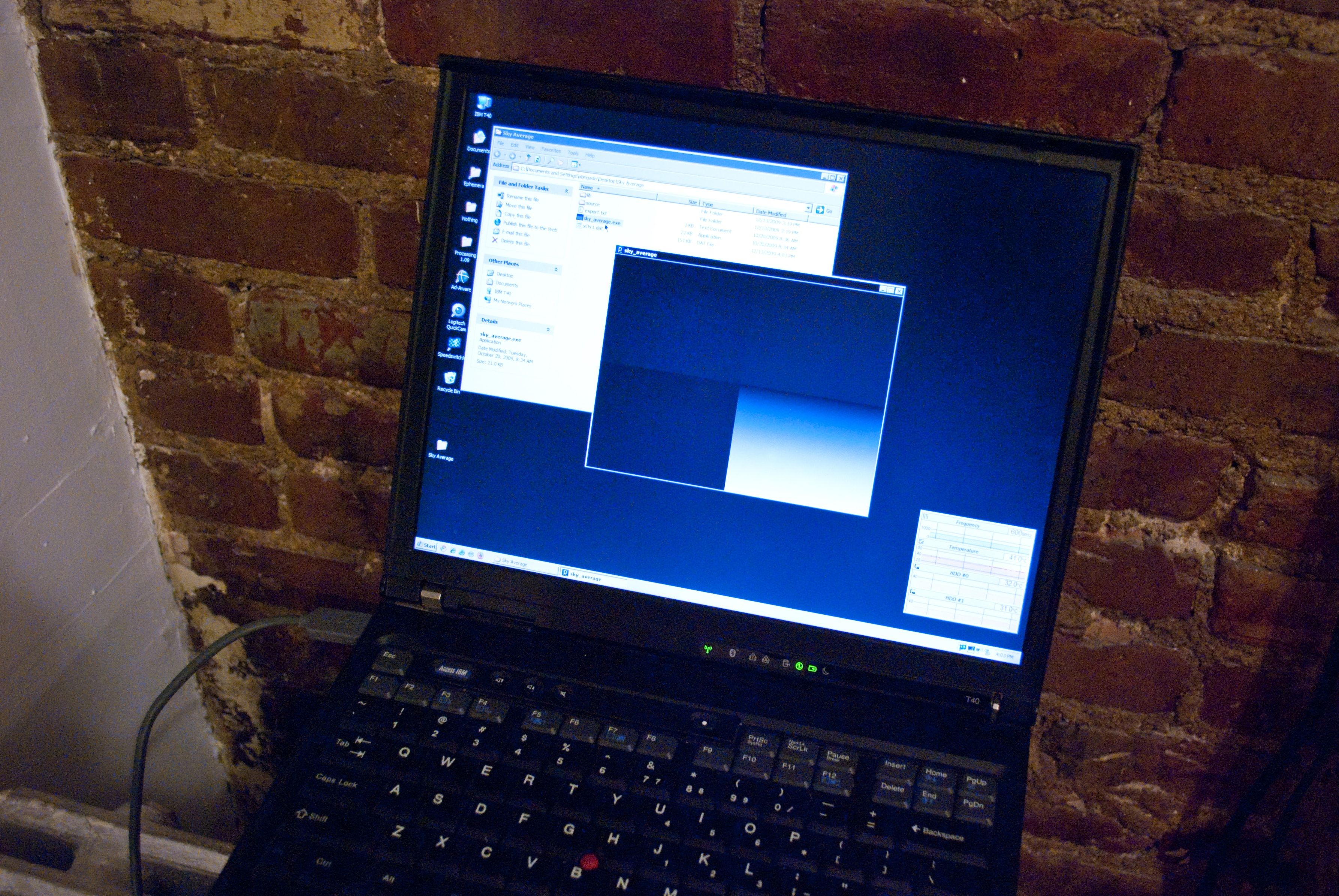

Development was relatively simple. Hot glue held up surprisingly well to the vibration motor.

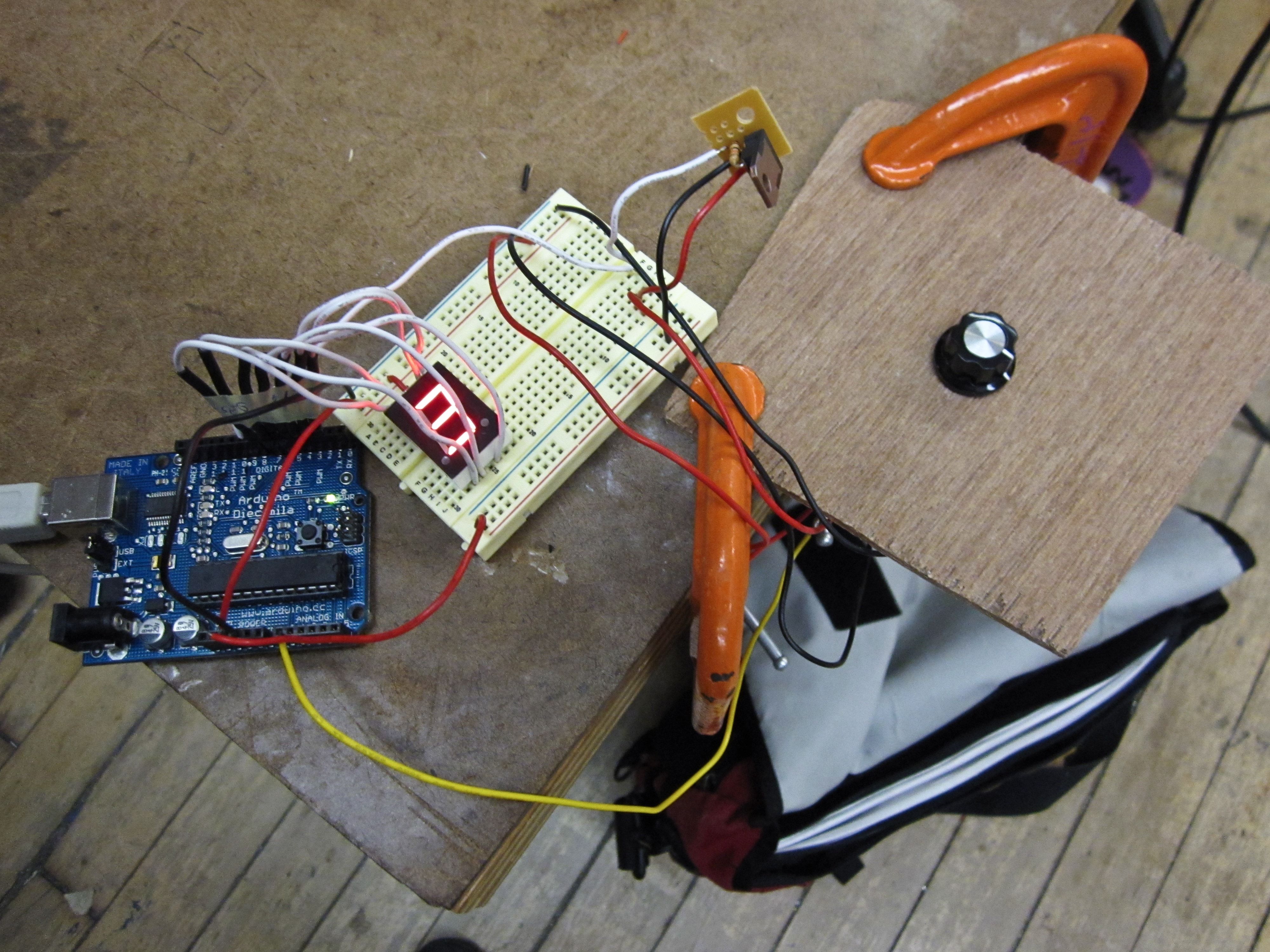

The basic test configuration includes a seven-segment LED display so I could verify when the channels changed.

Here’s the schematic. The seven-segment display adds some complexity, but it’s really just there for troubleshooting (sending the channel status out over serial would be much simpler). Even so, a shift register would make more sensible use of the Arduino’s pins if this were more than a proof of concept. The TIP120 between the Arduino and the vibration motor is definitely overkill; I only included it because Brain Radio was going to have separate power sources, and it would have made sense to run the motor from the non-Arduino supply. (Some of the graphics in the schematic were adapted from the Fritzing project.)
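As a sketch of the shift-register idea, the segment patterns used by `displayDigit()` in the code below can be packed into one byte per digit and sent out in a single call. The bit order here (segment A in bit 0 through G in bit 6) is an assumption that depends on how the register is wired, and `segmentByte` is a hypothetical name. The patterns themselves are the common-anode, active-low ones from the code, where a 0 bit (LOW) lights a segment.

```cpp
#include <cassert>
#include <cstdint>

// Segments lit for each digit, one bit per segment: bit 0 = A ... bit 6 = G.
// Assumed wiring; reorder the bits to match the shift register's outputs.
const uint8_t kSegmentsOn[10] = {
  0x3F, 0x06, 0x5B, 0x4F, 0x66,  // digits 0-4
  0x6D, 0x7D, 0x07, 0x7F, 0x6F   // digits 5-9
};

// Hypothetical helper: the byte to shift out for a common-anode display,
// where a 0 bit turns a segment on. Out-of-range digits blank the display,
// like the default case in displayDigit(). Bit 7 is unused and stays high (off).
uint8_t segmentByte(int digit) {
  if (digit < 0 || digit > 9) {
    return 0xFF;  // every segment off
  }
  return static_cast<uint8_t>(~kSegmentsOn[digit]);  // invert for active-low
}
```

On the Arduino, that byte could go to a 74HC595 with the core `shiftOut(dataPin, clockPin, MSBFIRST, value)` call followed by a latch pulse (pin names hypothetical), driving the display from three pins instead of seven.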

And finally, the code:

```cpp
// Buzz Pot
// Eric Mika, 2009
// Provides tactile feedback for a potentiometer to denote changes
// from one value range to another. Ideal for situations where an unknown
// number of values must be set by a single potentiometer.
// To do:
// 1. Debounce the thresholds.
// 2. Handle fringe-case runtime channel count changes.

int vibrationPin = 9;        // Turns the vibration motor on and off through a transistor.
int potPin = 0;              // Reads the potentiometer.
int potValue = 0;            // Stores the potentiometer value.
int channels = 10;           // Number of values selectable by the pot.
int currentChannel = 0;      // Starting value.
int lastChannel = 0;         // Channel from the previous pass through loop().
int vibDuration = 200;       // How long to turn the motor on when thresholds are crossed.
unsigned long vibStart = 0;  // Keep track of time so we know when to turn off the motor.

// Map digital pins to their respective LEDs in the 7 segment display.
// Could use a shift register instead to save pins.
int dispA = 2;
int dispB = 3;
int dispC = 4;
int dispD = 5;
int dispE = 6;
int dispF = 7;
int dispG = 8;

void setup() {
  // Set up 7 segment display pins.
  pinMode(dispA, OUTPUT);
  pinMode(dispB, OUTPUT);
  pinMode(dispC, OUTPUT);
  pinMode(dispD, OUTPUT);
  pinMode(dispE, OUTPUT);
  pinMode(dispF, OUTPUT);
  pinMode(dispG, OUTPUT);

  // Set up vibration pin.
  pinMode(vibrationPin, OUTPUT);
}

void loop() {
  // Read the analog input into a variable, correct for value inversion.
  potValue = 1023 - analogRead(potPin);

  // If 10 channels, return a number between 0 and 9...
  // Constrain to catch rounding errors at the top end.
  currentChannel = constrain(potValue / (1023 / channels), 0, channels - 1);

  // Show the current channel number on the 7 segment display.
  displayDigit(currentChannel);

  // Vibrate if we change channels.
  if (lastChannel != currentChannel) {
    vibStart = millis();
  }

  // Keep vibrating for the full duration...
  if ((millis() - vibStart) <= vibDuration) {
    digitalWrite(vibrationPin, HIGH);
  } else {
    digitalWrite(vibrationPin, LOW);
  }

  lastChannel = currentChannel;
}

// Shows a number on the 7 segment display.
// It's a common anode model, so LOW is actually on.
void displayDigit(int digit) {
  switch (digit) {
    case 0:
      digitalWrite(dispA, LOW);
      digitalWrite(dispB, LOW);
      digitalWrite(dispC, LOW);
      digitalWrite(dispD, LOW);
      digitalWrite(dispE, LOW);
      digitalWrite(dispF, LOW);
      digitalWrite(dispG, HIGH);
      break;
    case 1:
      digitalWrite(dispA, HIGH);
      digitalWrite(dispB, LOW);
      digitalWrite(dispC, LOW);
      digitalWrite(dispD, HIGH);
      digitalWrite(dispE, HIGH);
      digitalWrite(dispF, HIGH);
      digitalWrite(dispG, HIGH);
      break;
    case 2:
      digitalWrite(dispA, LOW);
      digitalWrite(dispB, LOW);
      digitalWrite(dispC, HIGH);
      digitalWrite(dispD, LOW);
      digitalWrite(dispE, LOW);
      digitalWrite(dispF, HIGH);
      digitalWrite(dispG, LOW);
      break;
    case 3:
      digitalWrite(dispA, LOW);
      digitalWrite(dispB, LOW);
      digitalWrite(dispC, LOW);
      digitalWrite(dispD, LOW);
      digitalWrite(dispE, HIGH);
      digitalWrite(dispF, HIGH);
      digitalWrite(dispG, LOW);
      break;
    case 4:
      digitalWrite(dispA, HIGH);
      digitalWrite(dispB, LOW);
      digitalWrite(dispC, LOW);
      digitalWrite(dispD, HIGH);
      digitalWrite(dispE, HIGH);
      digitalWrite(dispF, LOW);
      digitalWrite(dispG, LOW);
      break;
    case 5:
      digitalWrite(dispA, LOW);
      digitalWrite(dispB, HIGH);
      digitalWrite(dispC, LOW);
      digitalWrite(dispD, LOW);
      digitalWrite(dispE, HIGH);
      digitalWrite(dispF, LOW);
      digitalWrite(dispG, LOW);
      break;
    case 6:
      digitalWrite(dispA, LOW);
      digitalWrite(dispB, HIGH);
      digitalWrite(dispC, LOW);
      digitalWrite(dispD, LOW);
      digitalWrite(dispE, LOW);
      digitalWrite(dispF, LOW);
      digitalWrite(dispG, LOW);
      break;
    case 7:
      digitalWrite(dispA, LOW);
      digitalWrite(dispB, LOW);
      digitalWrite(dispC, LOW);
      digitalWrite(dispD, HIGH);
      digitalWrite(dispE, HIGH);
      digitalWrite(dispF, HIGH);
      digitalWrite(dispG, HIGH);
      break;
    case 8:
      digitalWrite(dispA, LOW);
      digitalWrite(dispB, LOW);
      digitalWrite(dispC, LOW);
      digitalWrite(dispD, LOW);
      digitalWrite(dispE, LOW);
      digitalWrite(dispF, LOW);
      digitalWrite(dispG, LOW);
      break;
    case 9:
      digitalWrite(dispA, LOW);
      digitalWrite(dispB, LOW);
      digitalWrite(dispC, LOW);
      digitalWrite(dispD, LOW);
      digitalWrite(dispE, HIGH);
      digitalWrite(dispF, LOW);
      digitalWrite(dispG, LOW);
      break;
    default:
      // Anything outside 0-9 blanks the display.
      digitalWrite(dispA, HIGH);
      digitalWrite(dispB, HIGH);
      digitalWrite(dispC, HIGH);
      digitalWrite(dispD, HIGH);
      digitalWrite(dispE, HIGH);
      digitalWrite(dispF, HIGH);
      digitalWrite(dispG, HIGH);
      break;
  }
}
```
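The first to-do item, debouncing the thresholds, matters because a reading that jitters by a count or two right at a boundary can flip the channel back and forth and make the motor chatter. One common remedy, offered here as a hedged sketch rather than the fix the project actually adopted, is hysteresis: only accept an adjacent channel once the reading has moved a few counts past the shared boundary. The name `stickyChannel` and the default margin of 8 counts are assumptions for illustration; the margin should stay well below the slice width (102 readings with ten channels).

```cpp
#include <algorithm>
#include <cassert>
#include <cstdlib>

// Hypothetical hysteresis helper for the "Debounce the thresholds" to-do.
// Slices the reading like the Buzz Pot code (reading / (1023 / channels),
// clamped), but holds on to the previous channel until the reading is at
// least `margin` counts past the boundary it crossed.
int stickyChannel(int reading, int channels, int previous, int margin = 8) {
  assert(channels > 0 && channels <= 1023);
  const int slice = 1023 / channels;
  const int candidate = std::min(reading / slice, channels - 1);

  // No valid previous channel, no change, or a jump of more than one
  // channel: nothing to debounce, so take the new value directly.
  if (previous < 0 || previous >= channels || std::abs(candidate - previous) != 1) {
    return candidate;
  }

  // Boundary shared by the two adjacent channels (start of the higher one).
  const int boundary = std::max(candidate, previous) * slice;
  const bool pastMargin = (candidate > previous) ? reading >= boundary + margin
                                                 : reading < boundary - margin;
  return pastMargin ? candidate : previous;
}
```

In `loop()`, this would stand in for the `constrain(...)` line as `currentChannel = stickyChannel(potValue, channels, lastChannel);`, leaving the buzz logic unchanged. The trade-off is a small dead band: each boundary effectively shifts by `margin` counts in the direction of travel, so the knob has to commit to the new channel before the motor fires.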