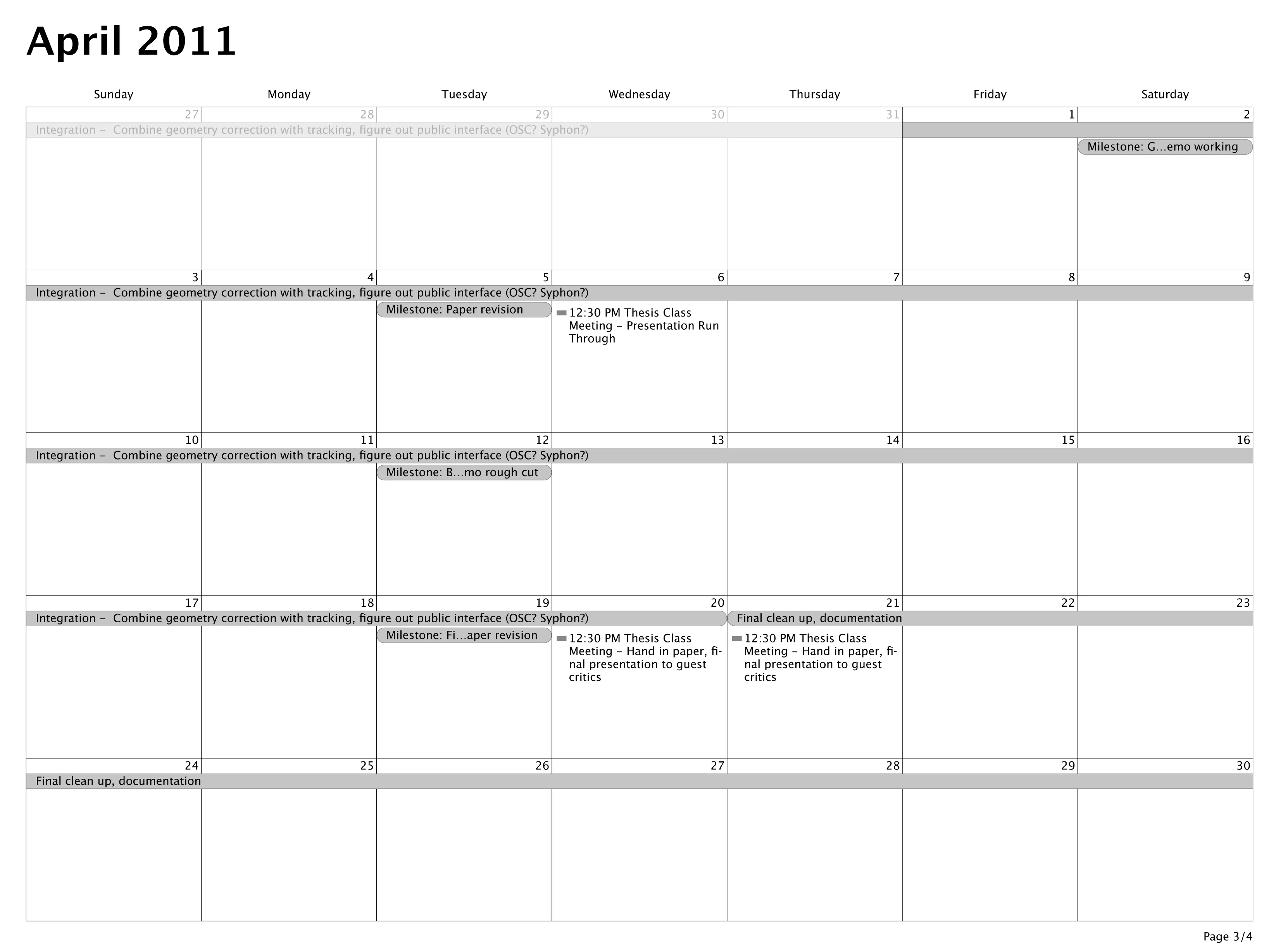

Concept

Flapping Toasters is an absurdist reconsideration of the classic screensaver Flying Toasters. You embody the toaster, flapping your arms to stay aloft, clapping to release toast, and tilting to roll away from danger. Created in collaboration with Michael Edgcumbe.

Video

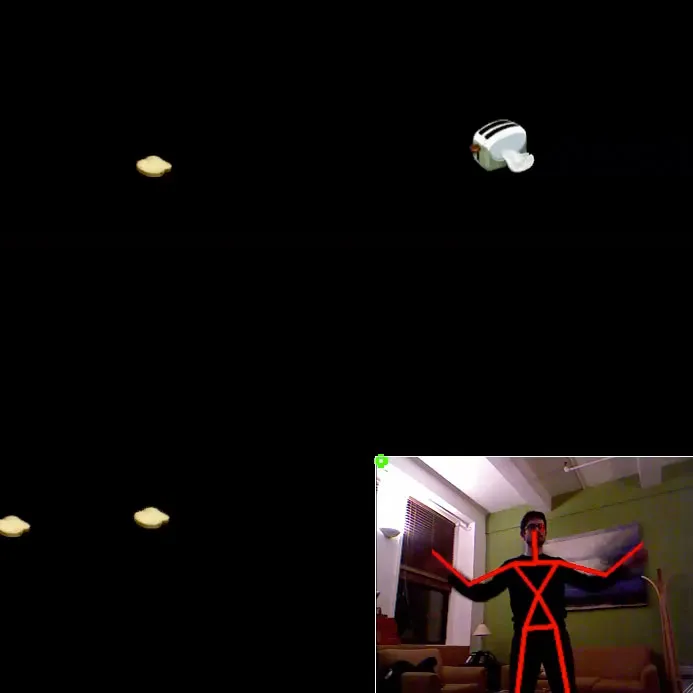

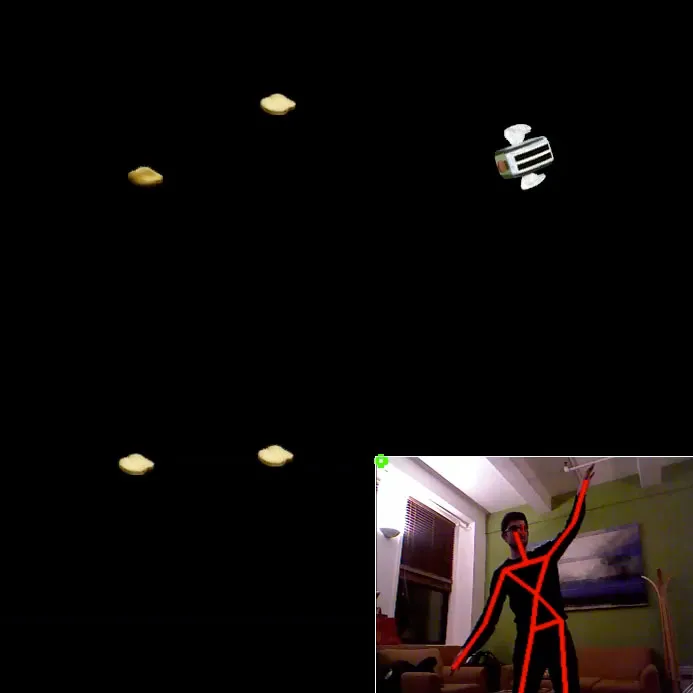

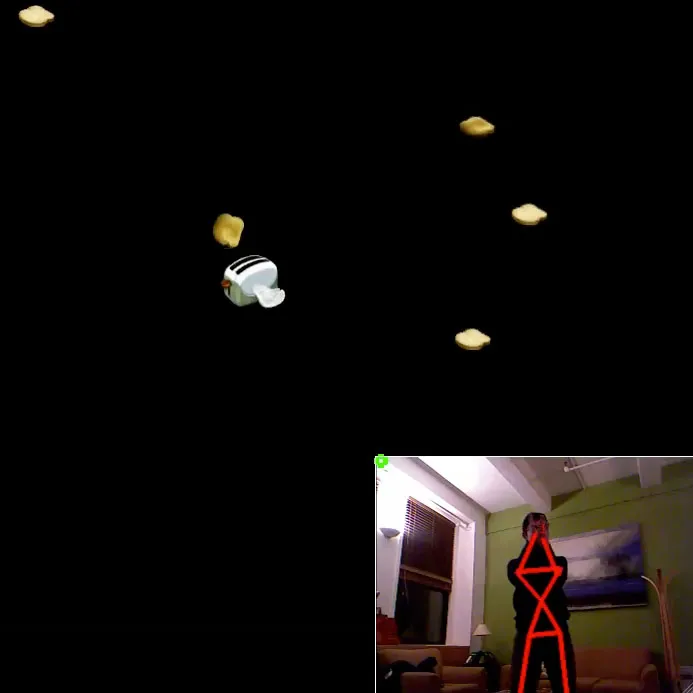

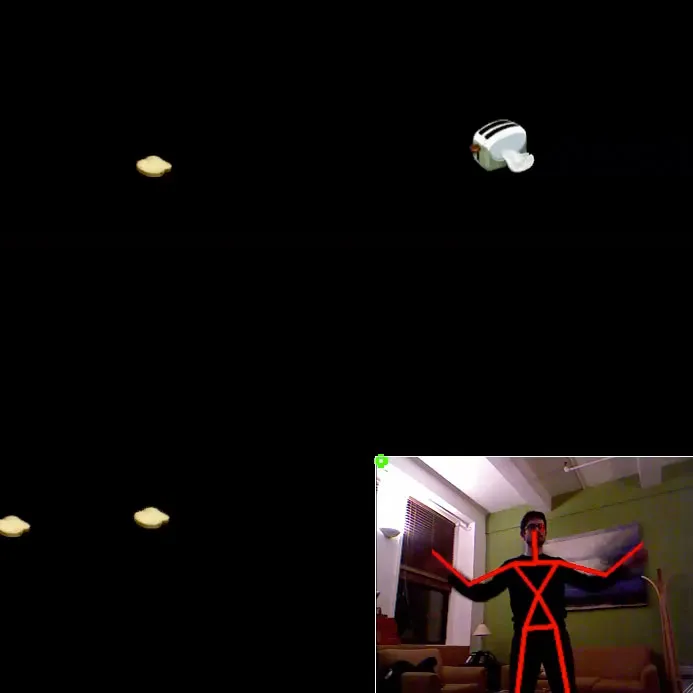

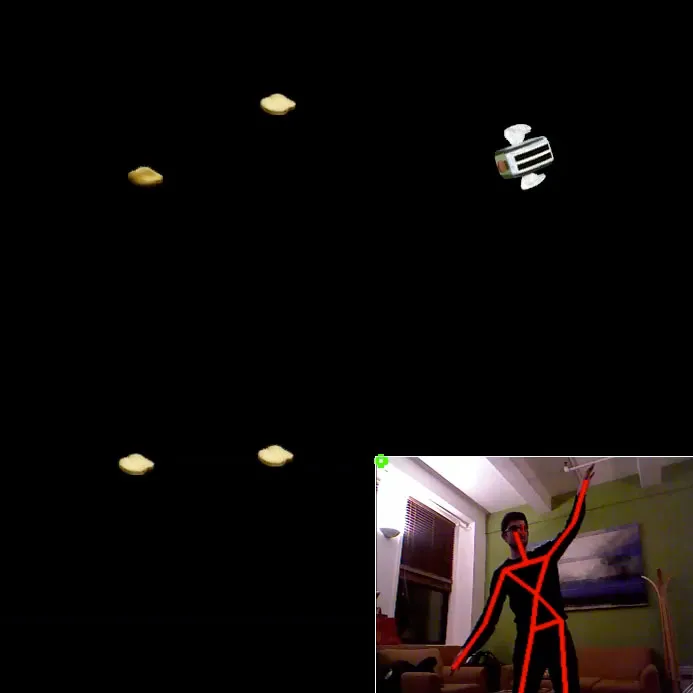

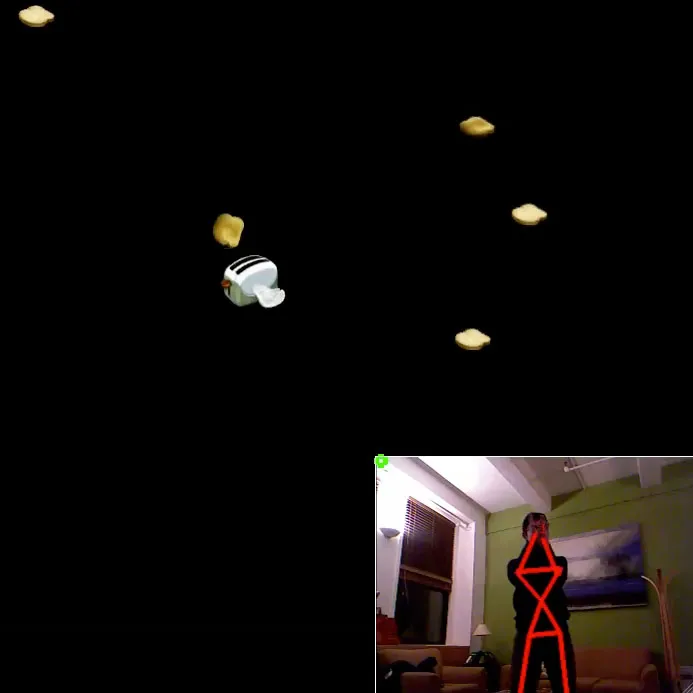

Screenshots

Clockwise from top left: Basic flapping / Multi-player / Tilting to roll / Clapping to release toast.

Background

The screensaver is forbidden territory for those seeking to elevate the status of new media art. A screensaver is lowbrow, unserious, and fetishizes aesthetics over content. I’ve explored this hostility a bit further in another post. At the same time, early screensavers represent a raw, unpretentious play with the screen’s possibilities combined with a novel set of constraints. (E.g. a screensaver must have no static content, run for an unlimited duration, etc.)

Michael and I decided to embrace the kitsch and compound the absurdity of the ever-criticized screensaver with the hype and widespread obsession surrounding the Kinect. Beyond trying to show some love for the screensaver, we were conscious of how many camera- and presence-driven “interactive artworks” present an interaction model that reduces the participant to maniacal arm waving and overwrought gestures designed to satisfy a finicky computer vision algorithm. We wanted to poke some fun at this trope by creating an interaction model that explicitly demands crazed arm-flapping from the participant.

Process / Implementation

We built the software in openFrameworks. Because we wanted skeleton tracking, we used the ofxOpenNI plugin rather than ofxKinect, which only provides RGB-D data.

Detecting flaps was a bit trickier than expected, since it involves ranging, direction detection, direction reversal detection, correlating the animation to the arm position, and so on. We ended up solving these issues with brute force: tracking the velocity of the hand points, filtering heavily, and setting up a dynamic bounding system that would allow people with various degrees of enthusiasm / gesticulation to fly successfully.
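To make the flap-detection idea concrete, here is a minimal sketch in plain C++ with no openFrameworks or OpenNI calls. It is not the project's actual code: the `FlapDetector` name, its fields, and every constant are assumptions chosen for illustration. It low-pass filters the hand's vertical velocity, watches for direction reversals, and keeps a slowly adapting pair of bounds so that small and large flappers can both register a flap.

```cpp
#include <algorithm>
#include <cmath>

// Hypothetical single-hand flap detector. Names and constants are
// illustrative assumptions, not values from the project source.
struct FlapDetector {
    float smoothing = 0.5f;         // 0..1; higher means heavier velocity filtering
    float deadZone = 0.1f;          // speeds below this (units/s) count as jitter
    float relax = 0.01f;            // how fast the bounds drift toward the hand
    float minRange = 0.05f;         // ignore players who have barely moved
    float minStrokeFraction = 0.3f; // a stroke must span this share of the range

    bool initialized = false;
    bool movingDown = false;
    float prevY = 0.0f, velocity = 0.0f;
    float lowY = 0.0f, highY = 0.0f; // dynamic bounds of this player's motion
    float strokeStartY = 0.0f;

    // y: hand height (larger is higher); dt: seconds since last sample (> 0).
    // Returns true on the frame a down-stroke ends, i.e. a completed flap.
    bool update(float y, float dt) {
        if (!initialized) {
            prevY = lowY = highY = strokeStartY = y;
            initialized = true;
            return false;
        }
        // Low-pass filter the raw velocity to suppress tracking noise.
        float raw = (y - prevY) / dt;
        prevY = y;
        velocity = smoothing * velocity + (1.0f - smoothing) * raw;

        // Bounds relax toward the hand, then stretch to contain it, so the
        // range adapts to both timid and enthusiastic flappers.
        lowY = std::min(y, lowY + (y - lowY) * relax);
        highY = std::max(y, highY + (y - highY) * relax);
        float range = highY - lowY;

        if (!movingDown && velocity < -deadZone) {
            movingDown = true;   // reversal: the down-stroke begins
            strokeStartY = y;
        } else if (movingDown && velocity > deadZone) {
            movingDown = false;  // reversal: the down-stroke ends
            float stroke = strokeStartY - y;
            return range > minRange && stroke >= minStrokeFraction * range;
        }
        return false;
    }

    // Hand position within the dynamic bounds, 0 (low) to 1 (high),
    // which could drive the wing animation frame.
    float normalizedHeight() const {
        float range = highY - lowY;
        return range > 0.0f ? (prevY - lowY) / range : 0.5f;
    }
};
```

In use, you would presumably keep one detector per tracked hand and call `update` once per frame with that hand's height. The dead zone doubles as hysteresis, so a hand hovering near zero velocity does not fire repeated flaps.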

We also added support for rolling in both directions. The player initiates a roll by tilting their arms in either direction. We used an angle threshold on an imaginary line drawn between the hand points to trigger it.
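The angle test is simple enough to sketch in a few lines of plain C++. The names (`Point2`, `Roll`, `detectRoll`), the coordinate convention, the mapping of tilt to roll direction, and the 30 degree default are all assumptions for illustration. The threshold actually used in the project is not stated here.

```cpp
#include <cmath>

struct Point2 { float x, y; };
enum class Roll { None, Left, Right };

// Hypothetical roll test. Assumes x grows toward the player's right and
// y grows upward. The default threshold is a placeholder, not a tuned value.
Roll detectRoll(Point2 leftHand, Point2 rightHand, float thresholdDegrees = 30.0f) {
    float dx = rightHand.x - leftHand.x;
    float dy = rightHand.y - leftHand.y;
    if (dx <= 0.0f) return Roll::None; // hands crossed or stacked: angle is ambiguous

    // Angle of the hand-to-hand line relative to horizontal.
    float degrees = std::atan2(dy, dx) * 180.0f / 3.14159265f;
    if (degrees > thresholdDegrees) return Roll::Left;   // right hand raised, left lowered
    if (degrees < -thresholdDegrees) return Roll::Right; // left hand raised, right lowered
    return Roll::None;
}
```

Level arms return `Roll::None`, so ordinary flapping (where both hands rise and fall together) should not trigger a roll by accident.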

Life as a toaster would be unfulfilling without the opportunity to toast bread, so Michael implemented hand clap detection to trigger the release of golden-brown toast.
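This write-up doesn't go into how the clap detection works internally, so the following is only one plausible approach, sketched in plain C++: fire when the distance between the hands drops below a threshold, and require the hands to separate again before the next clap can fire. The `ClapDetector` name, the metre units, and both distances are assumptions.

```cpp
#include <cmath>

struct Point3 { float x, y, z; };

// Hypothetical clap detector based on hand-to-hand distance.
// Distances are assumed to be in metres; both thresholds are guesses.
struct ClapDetector {
    float closeDistance = 0.10f; // hands closer than this count as a clap
    float openDistance = 0.25f;  // hands must separate this far to re-arm
    bool handsTogether = false;

    // Returns true only on the frame the hands first come together.
    bool update(Point3 left, Point3 right) {
        float dx = left.x - right.x;
        float dy = left.y - right.y;
        float dz = left.z - right.z;
        float distance = std::sqrt(dx * dx + dy * dy + dz * dz);

        if (!handsTogether && distance < closeDistance) {
            handsTogether = true;
            return true; // release the toast
        }
        if (handsTogether && distance > openDistance) {
            handsTogether = false; // re-arm for the next clap
        }
        return false;
    }
};
```

The gap between the two thresholds keeps a single clap from releasing a whole loaf when the tracked hand positions jitter around the trigger distance.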

Ideally there would be some kind of system for recording sample motions, saving them, and then automatically firing an event when a similar motion is detected along with a confidence value. I’m sure this kind of thing exists on industrial-strength Kinect development platforms (and must have existed somewhere in the Wii’s development stack for ages). Would be great to have a similar library for OF or Processing… something for the to-do list.

OpenNI makes tracking multiple players relatively trivial, so we implemented support for up to 15 simultaneous flappers (OpenNI’s internal limit).

The most recent version of the ofxOpenNI plugin includes support for OpenNI’s native hand tracking algorithms. They weren’t available during our initial development, so we used the raw skeleton data instead. These algorithms seem a bit more reliable for locating hands than the skeleton points alone, particularly when hands cross the torso, go out of frame, and so on. Essentially, they use a more generous set of inferences to give you the best guess about hand position.

Since Flapping Toasters is all about hand tracking, this new feature sounded like a great fit. So I tried a quick reimplementation of Flapping Toasters using these points instead of the end of the lower arm joint. It turned out not to work with more than one player, since I couldn’t find a way to associate two hands with a single person. For example, if three hands were detected, I had no way of knowing which two belonged to one person, and to whom the third might belong. There might be a way, but it wasn’t immediately obvious from the API.

The graphics are scraped sprites from After Dark’s original Flying Toasters. We created PNGs from each frame of animation and re-implemented the basic design of the screensaver.

The final element, which was surprisingly the hardest to get right, was the physics simulation. It’s your basic gravity / acceleration / velocity simulation, but getting the power of a flap just right was tricky. It still doesn’t feel quite like I imagined. Flapping is a bit too much work, but it’s also too easy to flap all the way off the top of the screen. The solution to this probably involves spending a bit more time with the sliders to get the numbers just right, as well as taking into account the vigor of the flap. (E.g. use some combination of average arm velocity during the down-stroke and the relative size of the flap as a coefficient on the flap power value.)
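Here is a rough sketch of that kind of simulation with the vigor idea folded in, in plain C++. None of it is taken from the project: `ToasterBody`, the constants, the clamping range for vigor, and the hard ceiling are all assumptions, and multiplying stroke speed by stroke size is just one possible "combination".

```cpp
#include <algorithm>

// Hypothetical vertical physics for one toaster. All constants are
// placeholders that would need tuning with sliders.
struct ToasterBody {
    float y = 0.0f;         // height above the floor
    float vy = 0.0f;        // vertical velocity
    float gravity = -9.8f;  // constant downward acceleration
    float flapPower = 3.0f; // velocity added by a flap of typical vigor
    float ceiling = 10.0f;  // assumed cap so players cannot leave the screen

    // strokeSpeed: average down-stroke hand speed relative to typical (1 = typical).
    // strokeSize: stroke length as a fraction of the player's range (0..1).
    void flap(float strokeSpeed, float strokeSize) {
        // Vigor scales the impulse; clamping keeps feeble flaps useful
        // and wild ones from launching the toaster.
        float vigor = std::min(2.0f, std::max(0.25f, strokeSpeed * strokeSize));
        vy += flapPower * vigor;
    }

    // Semi-implicit Euler step: update velocity first, then position.
    void update(float dt) {
        vy += gravity * dt;
        y += vy * dt;
        if (y < 0.0f) { y = 0.0f; vy = std::max(vy, 0.0f); }
        if (y > ceiling) { y = ceiling; vy = std::min(vy, 0.0f); }
    }
};
```

Clamping at a ceiling is the bluntest possible answer to flying off the top of the screen. Adding drag or capping upward velocity might feel better, but that is a matter for tuning.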

After Dark’s version of Flying Toasters included two possible soundtracks: Wagner’s Ride of the Valkyries, and a slightly less compelling theme song with karaoke-style lyrics. Michael and I opted for Wagner, and rendered out a MIDI version of the piece as a WAV for use in openFrameworks. I’d have preferred to make the recording using the MIDI synths from a Macintosh of the appropriate vintage, but we just couldn’t get access to one in time for the class deadline.

Improvements

After flapping for a few minutes, most people ask what they’re trying to do. We haven’t implemented any kind of game logic — it’s just you, the toaster, and the joy of flap-induced flight. In one sense it’s nice to keep things minimal. But if we really wanted a game dynamic, there are a bunch of possibilities.

Some kind of collision detection between players could make things more interesting. You’re also given an unlimited supply of toast. Perhaps you could try to “catch” un-toasted pieces of bread and carry them for a while (toasting them in the process) before clapping to release. Carry them for too long, and you’ll burn the toast. (The horror!)

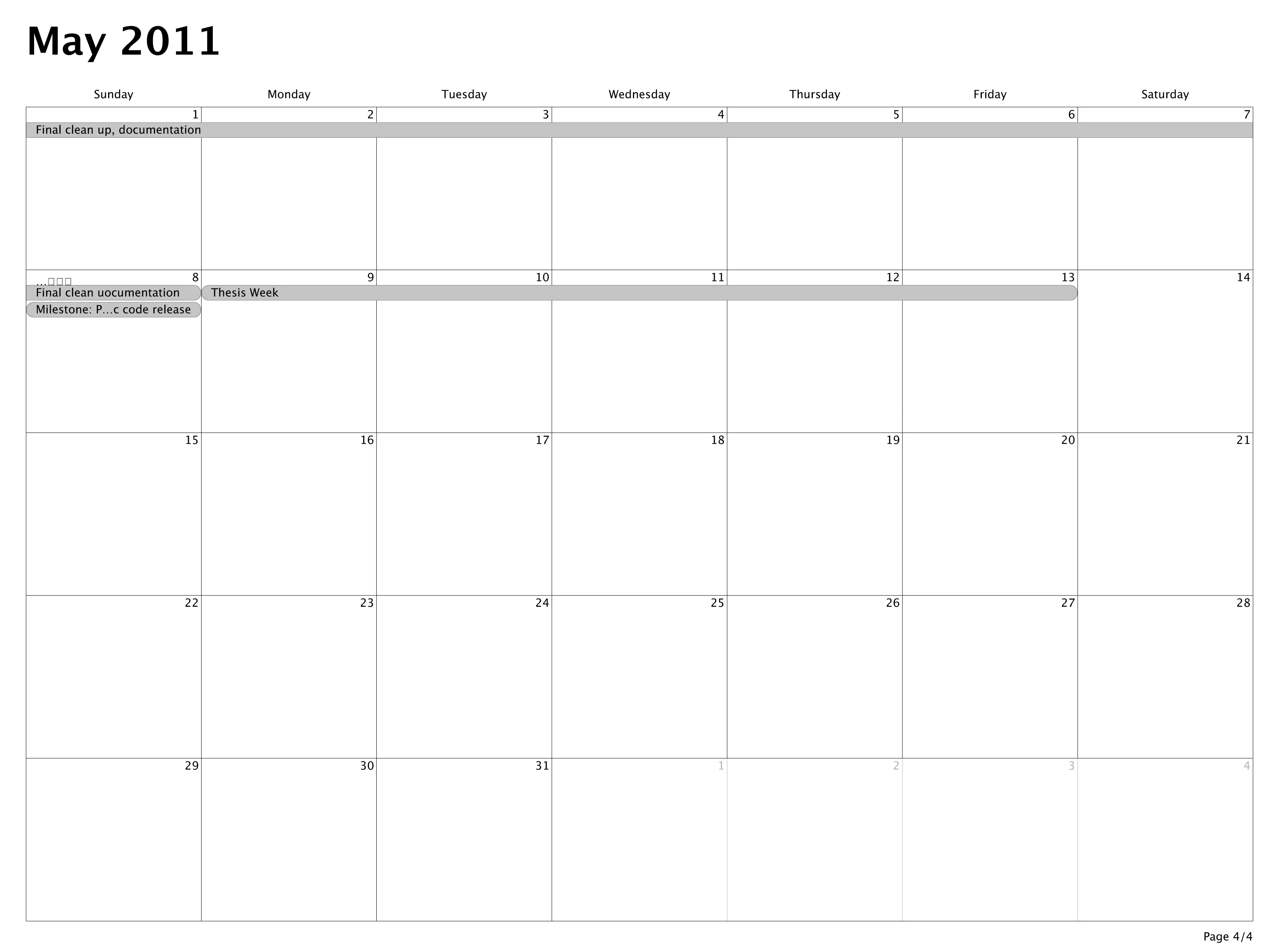

Download / Source Code

The application was written in openFrameworks. The source code is available on GitHub. See the repository’s readme file for additional notes on development / compilation. A Mac OS binary is also included in the GitHub repo.
